Related work

Previous approaches to OA classification using KAE data predominantly relied on hand-crafted feature extraction combined with conventional machine learning algorithms. Researchers have explored statistical descriptors such as entropy, signal energy, zero-crossing rate, and spectral centroids, among other time and frequency domain features, to characterize the differences in acoustic emissions between healthy and osteoarthritic joints. These features are then typically input to classifiers, including support vector machines, random forests, Gaussian mixture models, k-nearest neighbors, or shallow multi-layer perceptrons to discriminate between disease states14,15,16,17,18,19,20. While these methods offer some interpretability and are well-suited for small and controlled datasets, their reliance on manual feature engineering often results in sub-optimal and dataset-specific representations that limit generalizability and robustness across heterogeneous populations and recording devices. Moreover, these hand-crafted features may fail to capture subtle, complex patterns in the KAEs that are critical for accurate OA detection, underscoring the need for more data-driven and automated feature-learning approaches.

In recent years, deep neural networks have emerged as transformative tools in biomedical research due to their ability to automatically learn hierarchical and task-relevant representations from raw and minimally processed data. Unlike traditional methods that depend heavily on expert-driven feature engineering, deep learning models, specifically residual networks (ResNets)21, are capable of uncovering intricate structures and latent dynamics inherent in complex biomedical signals, including imaging, electrophysiological, and audio data22,23.

Originally developed to address image classification challenges, ResNets have gained prominence in biomedical research due to their superior capacity to effectively train very deep models and mitigate the vanishing gradient problem encountered in convolutional models. Unlike conventional convolutional neural networks (CNNs), which directly learn hierarchical representations via convolutional operations, ResNets introduce skip connections enabling the network to capture residual mappings of the input data. Formally, convolutional operations within these blocks can be described mathematically as,

$$\begin{array}{l}{{\bf{S}}}^{c}(i,j)=({\bf{I}}* {{\bf{K}}}^{c})(i,j)+{{\bf{B}}}^{c}(i,j)\\ \,\,\,\,\,\,\,\,\,\,\,=\mathop{\sum }\limits_{m=0}^{M-1}\mathop{\sum }\limits_{n=0}^{N-1}{\bf{I}}(i+m,j+n)\,{{\bf{K}}}^{c}(m,n)\\ \,\,\,\,\,\,\,\,\,\,\,+{{\bf{B}}}^{c}(i,j).\end{array}$$

(1)

where \({\bf{I}}\) is the input matrix and c ∈ C indexes the kernels that the model learns simultaneously. \({{\bf{K}}}^{c}\) and \({{\bf{B}}}^{c}(i,j)\) are, respectively, the convolutional kernel of size M × N and the bias term associated with kernel c. \({{\bf{S}}}^{c}(i,j)\) is the scalar output at spatial position (i, j) associated with kernel c.
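As an illustration of Eq. (1), the following minimal NumPy sketch evaluates the double sum for a single kernel. It assumes unit stride, no padding, and a scalar bias; the function name `conv2d_single_kernel` and the toy input are hypothetical and are not taken from the study.

```python
import numpy as np

def conv2d_single_kernel(I, K, B=0.0):
    """Evaluate Eq. (1) for one kernel K (size M x N) with a scalar bias B.

    Assumptions for this sketch: stride 1 and no padding.
    """
    M, N = K.shape
    out_h = I.shape[0] - M + 1
    out_w = I.shape[1] - N + 1
    S = np.empty((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            # Sum over the M x N patch whose top-left corner is (i, j).
            S[i, j] = np.sum(I[i:i + M, j:j + N] * K)
    return S + B

I = np.arange(16, dtype=float).reshape(4, 4)   # toy 4 x 4 input
K = np.ones((2, 2))                            # toy 2 x 2 kernel
S = conv2d_single_kernel(I, K, B=1.0)
```

Deep learning libraries implement the same operation in vectorized form and learn one kernel and bias per output channel c.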

In each layer of a ResNet, instead of only learning a direct input-output mapping, the network explicitly learns a residual mapping expressed mathematically as,

$${{\bf{S}}}_{\ell }={f}_{\ell }({{\bf{I}}}_{\ell })+{{\bf{I}}}_{\ell }$$

(2)

where Iℓ is the input to the block ℓ, and fℓ(Iℓ) represents the nonlinear transformation learned by a sequence of convolutional, batch normalization, and activation layers associated with the block ℓ. This architecture allows deeper networks to be trained effectively while mitigating the vanishing gradient problem that affects plain deep CNNs.
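The skip connection in Eq. (2) can be sketched in a few lines. The example below is only a toy under stated assumptions: the branch functions `zero_branch` and `toy_branch` are hypothetical stand-ins for the learned convolutional transformation fℓ.

```python
import numpy as np

def residual_block(I, f):
    """Return f(I) + I, the skip-connection form of Eq. (2)."""
    return f(I) + I

rng = np.random.default_rng(0)
I = rng.standard_normal((4, 4))

# If the learned branch outputs zeros, the block reduces to the identity mapping.
zero_branch = lambda x: np.zeros_like(x)
S_identity = residual_block(I, zero_branch)

# A toy nonlinear branch (hypothetical): a scaled ReLU of the input.
toy_branch = lambda x: 0.1 * np.maximum(x, 0.0)
S_toy = residual_block(I, toy_branch)
```

Because the input is added back unchanged, the block only has to learn the correction fℓ(Iℓ), and the identity path gives gradients a direct route through the network.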

ResNet variants have been widely adopted in biomedical applications, achieving strong results not only in image classification tasks but also in the analysis of biomedical signals24. In the domain of sound signal classification, a common approach involves converting the raw audio or biosignal data into time-frequency representations such as spectrograms or Mel-frequency cepstral coefficients, which can then be fed into pre-trained or custom ResNet architectures for feature extraction and classification25,26. This technique leverages the convolutional structure of ResNet to extract spatial and temporal patterns from the spectrogram, facilitating robust recognition of complex acoustic signatures found in biological data. The proven effectiveness of ResNets in classifying heart and lung sounds highlights their versatility and strong potential for advancing automated OA diagnosis from KAEs.

Despite the remarkable success of deep convolutional neural networks in various biomedical domains, their performance often hinges on the availability of large, diverse labeled datasets, a condition rarely met in specialized biomedical applications such as KAE analysis. Insufficient data can result in overfitting and suboptimal generalization, limiting the practical utility of these models in clinical settings24,27. In other domains, data-efficient deep learning has been explored through active learning and synthetic data generation to alleviate annotation and data scarcity constraints28,29. To address similar challenges in our setting, transfer learning has emerged as a powerful strategy, wherein knowledge learned from large-scale datasets (often from related domains, such as general audio or image datasets) is leveraged to improve performance on target tasks with limited data30. By fine-tuning pre-trained networks on smaller, domain-specific datasets, transfer learning not only accelerates convergence and enhances stability but also improves feature robustness and reduces the risk of overfitting, even in data-scarce scenarios24,31. This approach has been widely adopted across the literature, enabling more accurate and reproducible results in diverse biomedical tasks ranging from medical image analysis to biosignal classification.

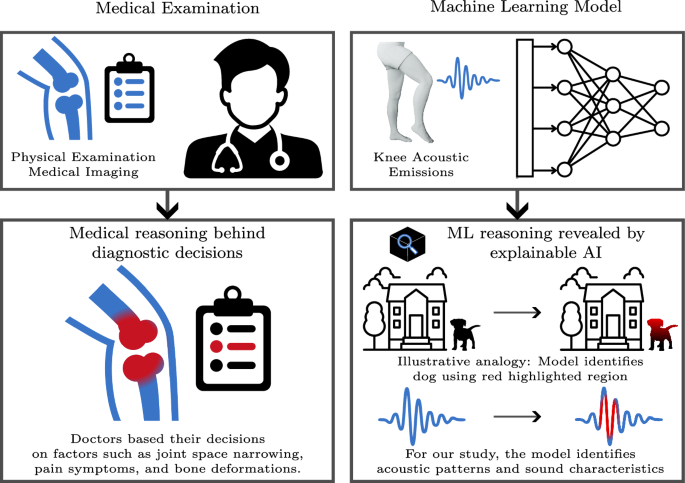

Another critical aspect of advancing biomedical research is to build confidence among clinicians and the broader medical community that deep neural networks are not merely extracting noise, but are genuinely learning and utilizing meaningful semantic patterns from data. This need for trust has driven the demand for greater transparency and interpretability in predictive models. In response, explainable AI (XAI) methods (e.g. GradCAM32, GradCAM++33) have emerged as essential tools for providing insights into the decision-making processes of complex neural networks, thereby enabling researchers and clinicians to validate model reliability, mitigate potential biases, and foster greater trust in automated systems34,35. Techniques such as saliency maps, layer-wise relevance propagation, and gradient-based attribution have been widely adopted to identify the most informative regions of input data that contribute to a specific prediction, whether in medical imaging, genomics, or physiological signal analysis36,37. The adoption of XAI in the biomedical community is accelerating, as these methods not only facilitate regulatory compliance and clinical acceptance but also aid in scientific discovery by uncovering novel biomarkers and patterns within complex datasets38. As a result, XAI is becoming a cornerstone for ensuring transparency and interpretability in state-of-the-art biomedical machine learning pipelines.

Among the growing suite of explainable AI methods, Full-Gradient (FullGrad) stands out for its comprehensive approach to attribution, as it combines both the neuron gradients with respect to the input and the bias contributions throughout the entire network39. Unlike conventional gradient-based techniques that may overlook important contextual information present in deep residual architectures, FullGrad captures relevant patterns from all layers, thereby producing more robust, interpretable, and fine-grained attribution maps. Formally, for a network defined as,

$${\mathcal{F}}(x)={f}_{L}\circ {f}_{L-1}\circ \cdots \circ {f}_{1}(x),$$

(3)

where fℓ, ℓ = 1, …, L, denote the individual layers, the FullGrad saliency map \({\mathcal{G}}({\bf{I}})\) can be formulated as,

$${\mathcal{G}}({\bf{I}})=\left|{\bf{I}}\odot \frac{\partial {\mathcal{F}}({\bf{I}})}{\partial {\bf{I}}}\right|+\mathop{\sum }\limits_{\ell =1}^{L}\mathop{\sum }\limits_{c=1}^{{C}_{\ell }}\psi \left(\left|{{\bf{B}}}_{\ell }^{(c)}\odot \frac{\partial {\mathcal{F}}({\bf{I}})}{\partial {{\bf{B}}}_{\ell }^{(c)}}\right|\right)$$

(4)

where I denotes the input spectrogram to the network, and \({\mathcal{F}}({\bf{I}})\) represents the scalar network output. The operator ⊙ indicates element-wise multiplication, while \(\frac{\partial {\mathcal{F}}({\bf{I}})}{\partial {\bf{I}}}\) is the gradient of the output with respect to the input. \({{\bf{B}}}_{\ell }^{(c)}\) refers to the bias term for kernel c in layer ℓ (see Eq. (1)), with ℓ = 1, …, L, and c ∈ {1, …, Cℓ}. \(\frac{\partial {\mathcal{F}}({\bf{I}})}{\partial {{\bf{B}}}_{\ell }^{(c)}}\) is the gradient of the output with respect to this bias term. The function ψ( ⋅ ) denotes an up-sampling operator that projects the bias-level attributions to the spatial resolution of the input, and ∣ ⋅ ∣ takes the element-wise absolute value to generate a non-negative saliency map. The first and the second terms in Eq. (4) account for direct and indirect contributions within deep neural networks, respectively. Thus, FullGrad provides more reliable visualizations of the spectrotemporal regions in input signals that drive model predictions. Recent studies indicate that these characteristics align particularly well with ResNet architectures due to FullGrad’s reduced sensitivity to the specificity of individual input features and its comprehensive capability to identify all relevant attributes involved in the decision-making process40. As a result, explanations derived from FullGrad exhibit robustness against minor perturbations, including subtle adversarial noise, while effectively preserving structural features that strongly influence the model’s output. This enhanced interpretability makes FullGrad particularly valuable for interpreting complex deep models like ResNets, an essential requirement in application domains that demand trustworthy explanations and actionable insights, such as biomedical signal analysis.
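To make the two terms of Eq. (4) concrete, the sketch below computes them by hand for a tiny two-layer ReLU network in NumPy. This is a toy under stated assumptions, not the attribution pipeline used in the study: the network, its random weights, and all variable names are hypothetical, and the up-sampling operator ψ is omitted because a fully connected toy model has no spatial bias maps. For ReLU networks of this kind, the signed input-gradient and bias-gradient terms sum exactly to the network output, which the last line checks.

```python
import numpy as np

rng = np.random.default_rng(0)
x = rng.standard_normal(5)            # toy input, standing in for the spectrogram I
W1 = rng.standard_normal((8, 5))      # hypothetical first-layer weights
b1 = rng.standard_normal(8)           # first-layer biases
w2 = rng.standard_normal(8)           # hypothetical output weights
b2 = 0.3                              # output bias

pre = W1 @ x + b1
mask = (pre > 0).astype(float)        # derivative of ReLU at the pre-activations
y = w2 @ (pre * mask) + b2            # scalar network output F(x)

# Gradients derived by hand for this two-layer network.
grad_b1 = w2 * mask                   # dF/db1
grad_x = W1.T @ grad_b1               # dF/dx
grad_b2 = 1.0                         # dF/db2

input_term = np.abs(x * grad_x)                              # first term of Eq. (4)
bias_terms = [np.abs(b1 * grad_b1), np.abs(b2 * grad_b2)]    # second term, before psi

# Without the absolute values, the input and bias terms add up to the output.
reconstruction = x @ grad_x + b1 @ grad_b1 + b2 * grad_b2
```

In a convolutional network the bias-gradient products are per-channel feature maps, which is why Eq. (4) up-samples them with ψ before summing them with the input term.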

Given the established correlation between KAEs and osteoarthritis, as well as the limitations of traditional hand-crafted feature-based approaches, there is a compelling need for more advanced, data-driven frameworks that can robustly generalize across clinical and real-world settings. Deep transfer learning with architectures such as ResNet addresses the data scarcity issue and enables powerful hierarchical representations of complex acoustic signals like KAEs. However, to ensure clinical adoption and trustworthiness, integrating explainable AI methods, specifically FullGrad, provides the crucial transparency needed for model predictions by revealing the acoustically relevant regions influencing diagnostic outcomes. In contrast to prior data-efficient deep learning approaches that focus on video surveillance or image-based disease detection and do not target KAE-based OA diagnosis or detailed spectrotemporal explanations28,29, our work adapts transfer learning-based ResNet models directly to KAE spectrograms and couples them with FullGrad attribution. By uniting these recent advances in deep learning and model interpretability, we present a deep learning framework for more accurate, interpretable, and scalable OA classification from KAE data.

Data collection

This study used a wearable acoustic sensing device design previously developed by Teague et al.41. The system consists of a portable, battery-powered, low-cost unit containing a custom-designed printed circuit board (PCB), a microcontroller, and four piezoelectric contact microphones (BU-23173-000, Knowles, IL, USA). The microphones were chosen because of their validated robustness under clinical noise42, high bandwidth, and scalable size. Microphones were placed medially and laterally to the superior and inferior edges of the patella, following prior literature. Positioning was performed by clinicians to ensure consistent anatomical placement across participants. The contact microphone configuration and data acquisition procedure were identical to those of studies such as Nichols et al.20 and Richardson et al.43, ensuring consistency and comparability with previous work.

Each subject was asked to perform a set of scripted maneuvers, including flexion-extension (FE), sit-to-stand (STS), and a short walking trial. These trials were designed following the general protocol described by Nichols et al.20, and performed at a standardized pace of approximately 0.25 Hz using a visual timer. All of the recordings were collected in clinical examination rooms or lab environments, which are not isolated from noise, thus capturing real-world conditions that enhance the practical relevance and usability of our approach in typical clinical settings.

Although we collected all three types of maneuvers, we only included the unloaded FE trials in our analysis. This choice was made to minimize the influence of external loading and soft tissue-related acoustic variability, which are more pronounced in STS and walking tasks. By focusing on unloaded FE, we ensured more consistent knee-generated acoustic signatures under controlled conditions. During each session, data from all four microphones were collected simultaneously.

The dataset includes 86 knees from 52 participants (Table 1). Clinical experts labeled 49 knees as healthy and 37 as having osteoarthritis (OA). Knees labeled as OA were classified based on a Kellgren-Lawrence grade of 2 or higher.

An important point to highlight is that a considerable portion of the dataset includes knees from individuals classified as obese (BMI > 30), totaling 31 participants. This makes our work one of the few studies that focus on high-BMI populations in the context of KAEs44,45. Most previous works either did not recruit obese participants or discarded their data46. The reason for this exclusion is that body mass affects knee loading and alters how sound propagates through soft tissue. These effects change both the propagation and the consistency of acoustic signals and can reduce signal clarity, which makes high-BMI cases very hard to interpret using traditional methods. Nevalainen et al.19 observed that BMI significantly affected both the predictive performance of AE-based models and the robustness of signal acquisition in obese individuals.

Including these high-BMI subjects, spread across the three cohorts, is crucial because obesity is highly prevalent in clinical settings and is strongly linked to OA incidence and progression. We therefore view this multi-cohort composition as a key strength of our study and a key part of our contribution, as it enables us to evaluate whether the proposed methods remain effective in a more challenging and realistic scenario.

Signal preprocessing

KAE signals are collected with a sampling frequency of 46.875 kHz. A band-pass filter between 250 Hz and 4.5 kHz is applied to remove low-frequency movement artifacts and focus on the frequency ranges where the knee acoustic signatures are present.
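The band-pass step can be sketched with SciPy as follows. The text specifies only the pass band (250 Hz to 4.5 kHz) and the sampling frequency; the filter family (Butterworth), its order, and the zero-phase application are assumptions made for this example, as are the function name `bandpass` and the synthetic test signal.

```python
import numpy as np
from scipy.signal import butter, sosfiltfilt

FS = 46_875                 # sampling frequency in Hz (from the text)
LOW, HIGH = 250.0, 4500.0   # pass band in Hz (from the text)

def bandpass(x, fs=FS, low=LOW, high=HIGH, order=4):
    """Zero-phase Butterworth band-pass; filter family and order are assumptions."""
    sos = butter(order, [low, high], btype="bandpass", fs=fs, output="sos")
    return sosfiltfilt(sos, x)

t = np.arange(FS) / FS                        # one second of samples
slow = np.sin(2 * np.pi * 20 * t)             # 20 Hz tone standing in for a movement artifact
burst = 0.5 * np.sin(2 * np.pi * 1000 * t)    # 1 kHz in-band component
y = bandpass(slow + burst)                    # the 20 Hz tone is suppressed, the 1 kHz tone is kept
```

Second-order sections are used because they tend to be numerically better behaved than transfer-function coefficients for band-pass designs.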

After filtering, we removed the initial inactive segments to avoid including non-informative signal regions caused by sensor settling or brace adjustment. The onset of voluntary movement was manually annotated using the goniometer signal, based on visual inspection of the knee angle trajectory. Specifically, the start of the first clear FE cycle was labeled by identifying the earliest point at which consistent angular displacement began.

This labeling was performed by an expert observer using synchronized plots of the goniometer and acoustic signals. The approach ensured that only segments corresponding to deliberate movement were retained, improving the temporal alignment between knee motion and associated acoustic emissions.

Following the removal of inactive segments, the signal was converted into a time-frequency representation using Short Time Fourier Transforms (STFT), allowing the classifier to extract temporally localized spectral features relevant to knee acoustic emissions.

We used four different window and hop-length configurations in our experiments, denoted as N128-H16, N128-H32, N64-H16, and N32-H16. Here, the notation “N#-H#” indicates the STFT parameters, with “N” referring to the window length and “H” representing the hop size, both expressed in samples. Given our sampling frequency of 16 kHz, these configurations correspond to window lengths of 8 ms (128 samples), 4 ms (64 samples), and 2 ms (32 samples), and to hop increments of 2 ms (32 samples) and 1 ms (16 samples). By analyzing spectrograms generated using these varied temporal and spectral resolutions, we aim to investigate how different time-frequency granularities affect the effectiveness of extracting distinctive acoustic-emission patterns from knee sounds relevant for OA assessment.
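The durations quoted above follow directly from dividing the sample counts by the sampling frequency. The short sketch below reproduces that arithmetic and additionally reports the frequency-bin spacing and frame overlap for each configuration; the bin spacing assumes that the FFT length equals the window length (no zero padding), which the text does not state. The helper name `describe` is hypothetical.

```python
FS = 16_000  # Hz, the sampling frequency quoted for the STFT configurations

configs = {
    "N128-H16": (128, 16),
    "N128-H32": (128, 32),
    "N64-H16": (64, 16),
    "N32-H16": (32, 16),
}

def describe(n_window, hop, fs=FS):
    """Return window length (ms), hop (ms), bin spacing (Hz), and overlap fraction."""
    return {
        "window_ms": 1000 * n_window / fs,
        "hop_ms": 1000 * hop / fs,
        "bin_hz": fs / n_window,       # assumes FFT length equals window length
        "overlap": 1 - hop / n_window,
    }

summary = {name: describe(n, h) for name, (n, h) in configs.items()}
for name, row in summary.items():
    print(name, row)
```

Shorter windows give finer time resolution at the cost of coarser frequency bins, which is the trade-off these four configurations explore.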

These choices, in general, align with the nature of the knee sounds themselves, which do not exhibit precise tonal frequencies but instead appear as broadband or band-limited bursts. By favoring high temporal resolution, our approach is more consistent with both the phenomenology of knee acoustic emissions and the analysis strategies adopted in prior OA studies.

After computing the STFT via

$${\bf{X}}(m,{\omega }_{k})=\mathop{\sum }\limits_{n=-\infty }^{\infty }x[n]\cdot w[n-m]\cdot {e}^{-j{\omega }_{k}n},$$

(5)

where x[n] is the KAE signal, w[n] is a window function (Hanning window in our case), m denotes the center time index of the window, and ωk is the k-th frequency bin, we retained only the real-valued part (i.e. \(\Re \left\{{\bf{X}}(m,{\omega }_{k})\right\}\)) of the resulting spectrograms to reduce the complexity while preserving temporal-spectral structure. To compress the dynamic range of the signal and reduce the influence of high-magnitude outliers, the spectrograms that will be provided to the deep learning models were log-scaled as,

$${{\bf{X}}}_{\log }(m,k)=\log \left(1+| \Re \left\{{\bf{X}}(m,{\omega }_{k})\right\}| \right).$$

(6)
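Eqs. (5) and (6) can be sketched in NumPy as shown below. This is a minimal illustration under stated assumptions rather than the study's implementation: frames are not padded, the FFT length equals the window length, and the phase of each frame is referenced to the frame start (the real part depends on this convention, which may differ from the one used in the study). The function name `log_real_stft` and the synthetic input are hypothetical.

```python
import numpy as np

def log_real_stft(x, n_window=128, hop=16):
    """Frame x, apply a Hann window, take the FFT, and return log(1 + |Re{X}|)."""
    window = np.hanning(n_window)
    n_frames = 1 + (len(x) - n_window) // hop
    frames = np.stack([x[m * hop: m * hop + n_window] for m in range(n_frames)])
    X = np.fft.rfft(frames * window, axis=1)   # shape: (frames, n_window // 2 + 1)
    return np.log1p(np.abs(X.real))            # Eq. (6)

rng = np.random.default_rng(0)
x = rng.standard_normal(16_000)                # one second of synthetic signal at 16 kHz
X_log = log_real_stft(x)                       # N128-H16 configuration
```

`np.log1p` evaluates log(1 + v) accurately for small v, so near-silent bins map to values close to zero instead of large negative numbers.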

To emphasize periods of knee acoustic emission and suppress regions dominated by noise or silence, we introduced an envelope-based weighting scheme. Specifically, for each KAE recording, we first computed the absolute value (rectified) signal and identified its local amplitude peaks. An envelope function was then derived by performing cubic spline interpolation between these peaks, resulting in a smoothly varying envelope signal xe[n]. Subsequently, this continuous envelope was segmented into overlapping frames consistent with the STFT spectrogram framing parameters and averaged within each frame, yielding a frame-based envelope vector \({x}_{e}^{{\prime} }[m]\) as:

$${x}_{e}^{{\prime} }[m]=\frac{1}{N}\mathop{\sum }\limits_{n=mH}^{mH+N-1}{x}_{e}[n],$$

(7)

where N and H represent the frame (window) and hop lengths (in samples), respectively.

Next, the frame-based envelope values were normalized to zero mean and unit standard deviation and rectified at zero to ensure non-negativity:

$${e}_{+}[m]=\max \left(0,\,\frac{{x}_{e}^{{\prime} }[m]-{\mu }_{e}}{{\sigma }_{e}}\right),$$

(8)

with μe and σe denoting the mean and standard deviation of \({x}_{e}^{{\prime} }[m]\), respectively.

Finally, to enhance temporal regions associated with meaningful knee acoustic events, we weighted each time frame of the log-spectrogram \({{\bf{X}}}_{\log }(m,k)\) by the corresponding normalized envelope frame value e+[m], yielding the final weighted spectrogram representation \({\widetilde{{\bf{X}}}}_{\log }(m,k)\),

$${\widetilde{{\bf{X}}}}_{\log }(m,k)={{\bf{X}}}_{\log }(m,k)\cdot {e}_{+}[m],\,\forall k.$$

(9)

This envelope weighting step emphasizes spectro-temporal regions that correspond to genuine acoustic emission events. The resulting weighted spectrograms, \({\widetilde{{\bf{X}}}}_{\log }(m,k)\), were subsequently provided as input representations to the deep neural network.
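The envelope weighting of Eqs. (7) to (9) can be sketched as follows. The peak detector (`scipy.signal.find_peaks` with default settings), the handling of samples outside the first and last peak, and the function names are assumptions made for this example; the synthetic signal and the placeholder spectrogram are hypothetical.

```python
import numpy as np
from scipy.interpolate import CubicSpline
from scipy.signal import find_peaks

def envelope_weights(x, n_window=128, hop=16):
    """Frame-wise, z-scored, and rectified envelope e_+[m] following Eqs. (7)-(8)."""
    rectified = np.abs(x)
    peaks, _ = find_peaks(rectified)                  # local amplitude peaks
    spline = CubicSpline(peaks, rectified[peaks])     # cubic spline through the peaks
    # Assumption: hold the end values outside the first/last peak (no extrapolation).
    n = np.clip(np.arange(len(x)), peaks[0], peaks[-1])
    x_e = spline(n)
    n_frames = 1 + (len(x) - n_window) // hop
    x_e_frame = np.array([x_e[m * hop: m * hop + n_window].mean()
                          for m in range(n_frames)])  # Eq. (7)
    z = (x_e_frame - x_e_frame.mean()) / x_e_frame.std()
    return np.maximum(0.0, z)                         # Eq. (8)

def apply_envelope(X_log, e_plus):
    """Scale every frequency bin of frame m by e_+[m] (Eq. 9); X_log is (frames, bins)."""
    return X_log * e_plus[:, None]

rng = np.random.default_rng(0)
x = 0.01 * rng.standard_normal(4_000)                 # low-level background noise
x[2_000:2_200] += rng.standard_normal(200)            # synthetic burst standing in for an emission
e_plus = envelope_weights(x)
X_log = np.ones((len(e_plus), 65))                    # placeholder log-spectrogram
X_weighted = apply_envelope(X_log, e_plus)
```

Frames whose envelope falls below the recording's mean receive a weight of zero, so only frames around the burst survive in `X_weighted`.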

Model architecture, training, and interpretability with explainable AI

Given the demonstrated effectiveness of CNNs across a wide range of domains, including but not limited to biomedical applications, we adopted a CNN-based architecture as the baseline model in our study. As our main classification model, we used ResNet-1821. It offers a good balance between depth and computational efficiency, which makes it practical for many biomedical and audio classification applications47,48,49.

The original ResNet-18 model expects a 3-channel input. We adapted the model for our single-channel spectrograms by decreasing the number of input channels to 1. Additionally, the original ResNet-18 ends with a fully connected layer designed for 1000-class classification implemented as a 1000-dimensional softmax output. Since our task is binary classification (OA vs. Healthy), we replaced this layer with a new classification head consisting of a global average pooling layer followed by a single fully connected neuron with sigmoid activation. This configuration enables the network to aggregate spatially distributed feature responses into a scalar probability, making it suitable for binary decision-making.

Since the number of samples in our dataset is limited, we employed a transfer learning strategy by reusing a ResNet-18 model whose weights are pre-trained on the ImageNet dataset50,21. Rather than updating all of the parameters, we gradually unfreeze and train only selected layers of the model, starting from the final ones. This approach reduces variance by decreasing the number of trainable parameters while preserving the general, low-level feature extractors learned from large-scale ImageNet image data. Given our dataset size, it offers a good trade-off between model capacity and generalization. Figure 2 illustrates the overview of our methodology. Table 2 details the proportion of trainable parameters for different numbers of unfrozen layers, while Table 3 specifies which layers are incrementally unfrozen during training.

The sound signal is first band-pass filtered and transformed into a spectrogram using STFT. This spectrogram is then fed into a partially fine-tuned ResNet-18, where only the final residual blocks are updated. During inference, gradient-based attribution (FullGrad) is applied to produce frequency-time saliency maps that highlight the regions contributing most to the model’s decision, enhancing explainability.

The proposed deep transfer learning models were trained using the binary cross-entropy loss and optimized with the Adam optimization algorithm. We explored several hyperparameter configurations to identify the optimal training setup. Initial learning rates for training ranged from 1 × 10−5 to 1 × 10−3. A learning rate scheduler (“ReduceLROnPlateau”) was applied to further ensure effective convergence; specifically, the learning rate was reduced by a factor of 0.5 whenever the validation F1-score failed to improve over a period of 20 consecutive epochs. The number of training epochs ranged from 150 to 600, depending on the initial learning rate and the number of trainable network layers. Due to class imbalance in the dataset (37 OA versus 49 healthy knees), class weighting was integrated directly into the binary cross-entropy loss function to reduce bias toward the majority class.
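The training configuration described above can be sketched in PyTorch as follows. The stand-in linear model, the random data, and the constant validation F1-score are placeholders so that the example runs quickly. The exact form of the class weighting is not given in the text, so weighting the OA class by the ratio 49/37 is an assumption, as is the use of `BCEWithLogitsLoss` (which applies the sigmoid internally).

```python
import torch
import torch.nn as nn

# Tiny stand-in network so the sketch runs quickly; the study uses ResNet-18.
model = nn.Sequential(nn.Flatten(), nn.Linear(65 * 20, 1))

# Assumption: weight the positive (OA) class by the healthy/OA ratio, 49/37.
pos_weight = torch.tensor([49 / 37])
criterion = nn.BCEWithLogitsLoss(pos_weight=pos_weight)

optimizer = torch.optim.Adam(model.parameters(), lr=1e-3)
scheduler = torch.optim.lr_scheduler.ReduceLROnPlateau(
    optimizer, mode="max", factor=0.5, patience=20   # "max" because a higher F1 is better
)

x = torch.randn(8, 1, 65, 20)                # placeholder batch of spectrograms
y = torch.randint(0, 2, (8, 1)).float()      # placeholder binary labels
for epoch in range(25):
    optimizer.zero_grad()
    loss = criterion(model(x), y)
    loss.backward()
    optimizer.step()
    val_f1 = 0.5                             # placeholder: a validation F1 that never improves
    scheduler.step(val_f1)

final_lr = optimizer.param_groups[0]["lr"]   # halved once the plateau exceeds the patience
```

In a real run, `val_f1` would be computed on the validation subjects after each epoch.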

For robust and reliable performance evaluation, experiments were repeated across multiple random seeds for both data shuffling (seeds 0, 5, 10, 16) and model initialization (seeds 100, 200). For each combination of spectrogram and model configuration, all training runs were conducted across the full cross-product of these seed values.
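The full cross-product of seeds can be enumerated as in the short sketch below, which yields eight runs per spectrogram and model configuration. The dictionary keys are hypothetical names chosen for this example.

```python
from itertools import product

shuffle_seeds = [0, 5, 10, 16]   # data-shuffling seeds from the text
init_seeds = [100, 200]          # model-initialization seeds from the text

runs = [{"shuffle_seed": s, "init_seed": i}
        for s, i in product(shuffle_seeds, init_seeds)]
```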

To ensure robust and unbiased assessment of model performance, we used a subject-wise hold-out validation strategy. Each individual may have multiple visits and multiple recordings for both knees; therefore, all data from a given subject were assigned exclusively to either the training, validation, or test set to prevent data leakage. The dataset was partitioned into 60% training, 20% validation, and 20% test subsets.
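A subject-wise split can be sketched as follows: subjects, not recordings, are shuffled and partitioned, so every recording of a given subject lands in exactly one subset. The recording table, the subject identifiers, and the function name `subject_split` are hypothetical, and applying the 60/20/20 proportions at the subject level is an assumption.

```python
import random

def subject_split(subject_ids, fractions=(0.6, 0.2, 0.2), seed=0):
    """Shuffle unique subject IDs and cut them into train/validation/test groups."""
    subjects = sorted(set(subject_ids))
    random.Random(seed).shuffle(subjects)
    n_train = round(fractions[0] * len(subjects))
    n_val = round(fractions[1] * len(subjects))
    return (set(subjects[:n_train]),
            set(subjects[n_train:n_train + n_val]),
            set(subjects[n_train + n_val:]))

# Hypothetical recording table: (subject_id, knee) pairs for 52 subjects.
recordings = [(f"S{idx:02d}", knee) for idx in range(52) for knee in ("L", "R")]
train_ids, val_ids, test_ids = subject_split([s for s, _ in recordings])
train = [r for r in recordings if r[0] in train_ids]
```

Splitting by recording instead would allow the two knees or repeated visits of one person to appear in both training and test data, which is the leakage the subject-wise strategy avoids.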

For benchmarking, we compared the proposed deep transfer learning framework against two widely used traditional machine learning models: Random Forest and a simple z-score-based classifier, which predicts sample class membership by comparing the z-scored mean and standard deviation of each class in the training set. This comparative analysis enables a direct evaluation of the performance improvements offered by our approach in relation to established baseline methods.

As discussed in the Related work section, the FullGrad algorithm has demonstrated superior performance and reliability compared to other state-of-the-art XAI approaches. Therefore, we adopted FullGrad to interpret our deep transfer learning model and to provide clear insights into the decision-making process. Specifically, we applied FullGrad to generate saliency maps highlighting the most influential time-frequency regions of the knee acoustic emissions that guided the model’s osteoarthritis classification decisions. These visual explanations enabled us to verify that the model’s predictions were based solely on physiologically meaningful features rather than artifacts or background noise, thus enhancing the trustworthiness and transparency of the proposed deep learning framework in clinical and practical applications.
